We shall start with a bit of history:

- 2016: Building a Low Power PC on Skylake – 10 watts idle

- 2019: 9w Idle – Creating a low power home NAS / file server with 4 Storage Drives

- 2021: (no write-up) – 11 watts using an Intel i3-10320 on a Gigabyte H470M DS3H

Not all my systems have been so successful. In 2022 I measured a couple of other systems at 19 watts and 27 watts as part of Curbing the “Gas-Guzzling” tendencies of AMD Radeon with Multi-Monitor. While I did manage to get that 27 watt AMD system down in power some time later, not every CPU/motherboard combo is destined for the 10 watt ballpark.

—

Before going further: the 7 watt figure for this system was measured before any storage was added. The 7 watts (measured at the wall) includes:

- Motherboard (Intel H770)

- CPU (Intel i5-12400)

- 64GB RAM

- SSD (booting Ubuntu Server 23.04)

- PSU (Corsair)

- C-States set up in BIOS so that it reaches C8

- powertop with auto-tune (which disabled the USB keyboard when the port went to sleep)

Note that if I don’t allow powertop to disable the keyboard, I get 8 watts measured at the wall.
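PowerTOP's auto-tune enables USB runtime autosuspend by writing `auto` to each device's `power/control` file in sysfs. If you would rather spend the extra watt and keep the keyboard responsive, one option is to write `on` back for just that device after auto-tune has run. Below is a minimal sketch. It assumes the standard Linux sysfs layout under `/sys/bus/usb/devices` and that the keyboard can be picked out by a substring of its `product` string. The match text is hypothetical and will need adjusting for your hardware. The function must run as root.

```python
from pathlib import Path
from typing import List

def keep_usb_devices_awake(match: str, sysfs_root: str = "/sys/bus/usb/devices") -> List[str]:
    """Disable runtime autosuspend for USB devices whose product string contains `match`.

    Returns the sysfs names (e.g. "1-3") of the devices that were changed.
    """
    changed = []
    for dev in sorted(Path(sysfs_root).iterdir()):
        product = dev / "product"
        control = dev / "power" / "control"
        # Interface entries such as "1-3:1.0" have no product file and are skipped.
        if not (product.is_file() and control.is_file()):
            continue
        if match.lower() in product.read_text().lower():
            control.write_text("on")  # "on" = never autosuspend, "auto" = allow it
            changed.append(dev.name)
    return changed
```

For example, `keep_usb_devices_awake("keyboard")` run as root after `powertop --auto-tune`. This trades back roughly the 1 watt difference described above.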

—

Let’s get into detailed specs and component choices. This time around I had the following goals:

- low idle power

- reasonable CPU performance for compression

- able to handle 12 hard drives + at least 1 NVMe

- capacity to (eventually) convert those 12 hard drives to 6 NVMe + 6 SSD SATA

- keep costs under control – since a motherboard purchase would be required, try to stick with DDR4 and reuse a CPU I already have.

Putting together a new system with the hopes of getting in the ballpark of the 10 watt range *measured from the wall* is often not only a challenge, but a bit of a gamble. Sometimes you just have to take your best educated guesses in terms of components, build your rig, tune what you can, and let the chips fall where they may.

Motherboard – ASUS Prime H770-Plus D4

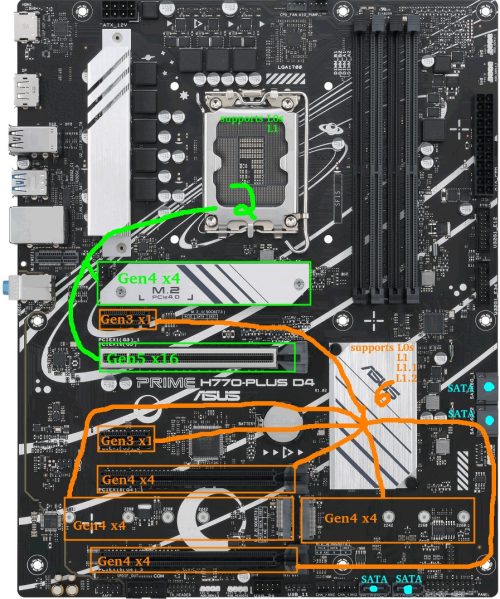

Before I begin, here is a quick look at the motherboard layout. The GREEN CPU-connected slots and ORANGE chipset-connected slots will become relevant throughout this write-up.

At the time of writing, widely available consumer options were motherboards in the Intel 600/700-series and AMD 500/600-series.

One of my goals above was the capacity for an eventual 6 NVMe drives.

Digging into deeper details as to why this can be a challenge (feel free to skip this section)…

Problem: There are no consumer motherboards with 6x M.2 slots that can all be used at the same time in PCIe mode. On AMD the MEG X570S Unify-X Max *looks* like it does, but check the manual and you’ll find that if you try to populate all 6, the last one has to be a SATA variant. The ASRock Z790 PG Sonic also has 6 slots, but you can only use 5 of them (with a legitimate excuse: they offer a Gen5 NVMe slot but it comes with an either/or caveat).

Why This Problem Exists: There are chipset lane limitations on consumer boards. Assuming I want the ability to run all M.2 in Gen4x4 and assuming a manufacturer were actually willing to devote all the lanes to M.2 NVMe slots (they’re not), AMD X570 and Intel B760 would max at three M.2 slots, with AMD B650 and Intel H670/Q670/Z690/W680 managing four. Five M.2 slots are possible on AMD X670 and Intel H770 boards. Six on a Z790 board. Beyond that, extraordinary measures like robbing the main PCIE slot of lanes would be required. If sheer M.2 count were desired, manufacturers could theoretically run lanes in Gen4x2 or add some Gen3 M.2 slots, but at that point they’ve created a *very* niche product.

The Solution: PCI-E to M.2 adapters became necessary. When searching for a motherboard, it became a matter of counting the included M.2 slots plus any available PCI-E slots capable of x4 or higher. My options were now limited to AMD X570, Intel H770, and Intel Z790 motherboards. Note that while using bifurcation is a possibility on some motherboards to get more than 1 NVMe out of the main PCIe slot, I decided not to rely on it.

I decided to go the Intel route for a few reasons:

- Chipset TDP: 600/700-series Intel chipsets all have a 6W TDP, whereas the TDP of the AMD X670 chipset is pretty high (7w+7w). AMD chipset power consumption has concerned me for a while, as previous X570 chipsets had a TDP of 11w and needed a fan.

- Chipset Speed: Intel H670/Q670/W680/Z690/H770/Z790 chipsets have a DMI 4.0 x8 link to the CPU. AMD X570/B650/X670 have a PCIe 4.0 x4 link to the CPU. Theoretical throughput on the Intel should be twice as much as AMD (16GB/s vs 8GB/s).

- I already had 64GB of DDR4 that the Intel system could use. AMD 600-series chipsets are all DDR5-only.

- I already had an Intel 12th Gen CPU.

- I’ve yet to see any positive discussion around AM5 power consumption. At all. Update: as I was writing this, news actually came out about AMD 7000-series CPUs burning/bulging where the motherboard socket pins meet the CPU. Yeah, sorry AMD, not this time.
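The 16GB/s vs 8GB/s chipset-link figures above are rounded. A quick check follows. It assumes the DMI 4.0 x8 link uses PCIe 4.0 signalling (16 GT/s per lane with 128b/130b encoding). It also ignores packet and protocol overhead, which pushes real-world numbers lower still.

```python
GT_PER_S_PER_LANE = 16           # PCIe 4.0 transfer rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding

def pcie4_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, before packet/protocol overhead."""
    return GT_PER_S_PER_LANE * ENCODING_EFFICIENCY / 8 * lanes

amd_uplink = pcie4_gb_per_s(4)    # AMD chipset link: PCIe 4.0 x4
intel_uplink = pcie4_gb_per_s(8)  # Intel DMI 4.0 x8
print(f"AMD x4: {amd_uplink:.2f} GB/s, Intel x8: {intel_uplink:.2f} GB/s")
# AMD x4: 7.88 GB/s, Intel x8: 15.75 GB/s
```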

So Intel it was. After checking out available DDR4 motherboards on the market, I quickly narrowed options to 2 manufacturers: MSI and ASUS.

Don’t care about the board comparisons? Feel free to skip this.

The enticing MSI boards were the PRO Z790-P WIFI DDR4 and Z790-A WIFI DDR4. Nearly identical on the surface, except the “A” is a little more premium (audio, rear ports, heatsinks, power phases, etc). Pros/cons:

- Pro: 4x M.2 (Gen4x4) + 1x PCIE Gen5x16 + 1x PCIE Gen4x4 supports a total of 6 Gen4 NVMe

- Pro: 2x PCIE Gen3x1 extra

- Pro: 6 SATA ports

- Con: Intel 2.5G LAN (known to be problematic and buggy)

- Con: I’m not a fan of the MSI BIOS

- Con: My current B660 board that results in higher idle consumption than expected is an MSI.

Attractive ASUS options were the Prime H770-Plus D4 and Prime Z790-P D4 (optional WIFI edition). Getting into the TUF, Strix, or ProArt was just too expensive.

I’ll start by listing pros/cons for the H770-Plus:

- Pro: 3x M.2 (Gen4x4) + 1x PCIE Gen5x16 + 2x PCIE Gen4x4 supports a total of 6 Gen4 NVMe

- Pro: 2x PCIE Gen3x1 extra

- Con: Only 4 SATA ports

- Pro: 2.5G Realtek Network Adapter (preferable to Intel 2.5G LAN these days) (see comments)

The Z790-P D4 is similar except it has more power phases, better heatsinking, more USB ports, an extra fan header, and for our purposes…:

- +1 PCIE Gen4x4

- -1 PCIE Gen3x1

Ultimately the ASUS Prime H770-Plus D4 was about $100 cheaper at the time and is what I chose.

One upside I’ve found with “cheaper” boards is they tend to have fewer components and thus less vampire power drain at idle, though this isn’t always a certainty.

CPU – Intel i5-12400 (H0 stepping) – Alder Lake

I already had this CPU as part of a previous desktop build. At the time it was chosen for the desktop system because:

- it had AV1 hardware decode

- it had the highest performance available from the Intel lineup of the 12th generation that avoids the E-core silicon overhead

- in that build, I was getting a new motherboard with 2xDP anyway, and going older-gen didn’t make sense to me.

That desktop build turned out to be a disappointment, and ranks as one of my least favorite builds.

Some details…

I had issues where sometimes only 1 of 2 DP-attached monitors would wake in Linux which meant I had to either pull/reconnect the other DP connector, or manually suspend/resume the system so it could try again.

Another issue was that rebooting between Windows/Linux sometimes caused odd issues which necessitated a full poweroff/restart.

Hardware decode on Ubuntu using Wayland is still problematic and when programs tried to use it to play video, problems would ensue.

Finally, unlike my previous Intel systems which could all be brought down near the 10 watt mark, this one was idling at 19 watts, though I suspected the MSI motherboard I was using may have been a factor.

Most of the headaches I experienced were related to the GPU and display. Since I was about to build something server-oriented, that was no longer a factor.

MEMORY – 64GB DDR4-3200

Here’s what I used:

- 2x16GB Kingston HyperX dual-rank (Hynix DJR)

- 2x16GB Kingston HyperX single-rank (Hynix CJR)

This was memory I already had. I ran the 4 sticks of memory at the XMP profile of the dual-rank kit which was 16-18-18-36. Everything else was essentially left to the defaults except that I ran the RAM at 1.25 volts (higher than stock 1.20, but lower than the XMP 1.35v setting). TestMem5 and Memtest86 showed stability at 1.22v, though testing this memory on previous motherboards had shown 1.22v to be unstable, so for a little extra buffer when it comes to stability I boosted the voltage to 1.25v.

Boot Drive – Sandisk Ultra 3D 1TB SSD

This component wasn’t deliberately chosen. When I wanted a fresh Ubuntu Server install for testing, this happened to be the only SSD I had kicking around that wasn’t currently being used. I was going to be doing a lot of A/B testing on PCIE and NVMe devices, so installing Ubuntu 23.04 to a SATA SSD made sense to keep PCIE slots free.

Note that after testing, the main OS was to be run on a Samsung SSD 970 EVO Plus 500GB NVMe. Not much to say except that Samsung stuff tends to reliably go into low power modes.

Having used both drives, I can’t measure any power difference between them in my testing. Tom’s Hardware tested the Samsung idle at 0.072 watts (via ASPM/APST), and Anandtech tested the Sandisk Ultra 3D idle to be 0.056 watts (via ALPM). Both are well below the 1W resolution of my Kill-A-Watt meter.

PSU – Corsair RM750

As much as this 750W PSU may appear to be overkill for a system intended to sit around 10 watts, when 12 drive motors spin up at the same time, the instantaneous load is likely to be quite high. Seagate states 2A/3A DC/AC peak currents on the 12V rail for one of their 10TB 3.5″ drives. Even peak random read/writes can clock in at over 2A.

This bursty power demand has the potential to be problematic if the PSU isn’t up to the task. If an array of 6 drives collectively pulls 150-200 watts at the same moment the CPU spikes to a peak of 120W, that’s a jump from around 10 watts idle to around 400 watts. This could easily cause an instantaneous voltage dip – if it dips enough to cause an immediate crash/reboot it’s probably not a big deal, but if it dips just enough that data is corrupted during a memory refresh or when another drive is mid-write… that’s a more painful problem. Oversizing the PSU to some degree (or adding some in-line capacitors to the power rails) makes sense.
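Here is a back-of-the-envelope sketch of the drive side of that spike on the 12V rail. It takes Seagate's 2A (DC) and 3A (AC) peak ratings quoted above as per-drive worst cases and assumes every drive peaks at once. It only covers the drives' 12V draw plus the CPU figure. The drives' 5V draw, the rest of the board, and PSU conversion losses aren't modeled, and they push the total higher.

```python
RAIL_V = 12.0
CPU_PEAK_W = 120  # CPU peak used in the text

def drive_peak_watts(drives: int, peak_amps: float) -> float:
    """12V rail load if every drive hits its peak current at the same moment."""
    return drives * peak_amps * RAIL_V

for label, amps in (("DC", 2.0), ("AC", 3.0)):
    drives_w = drive_peak_watts(6, amps)
    print(f"6 drives at {amps} A ({label}): {drives_w:.0f} W, plus CPU: {drives_w + CPU_PEAK_W:.0f} W")
```

With 6 drives this gives 144-216W for the drives' 12V draw alone, in the same range as the 150-200 watt figure above.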

Fortunately, despite operating outside of the peak efficiency range, much of the Corsair RM series is pretty efficient across a wide range.

Power Measurements – Initial

A few important bits:

- Power measured from the wall

- Intel PowerTOP was used to auto-tune settings

- Ubuntu Server 23.04

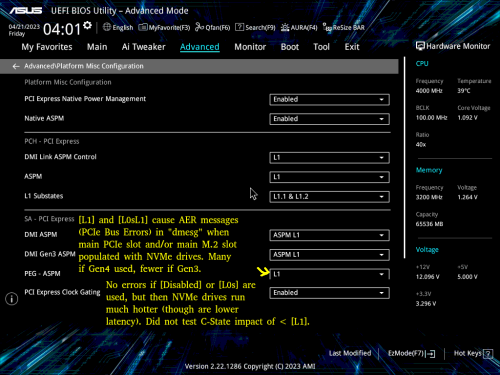

A few potentially-important BIOS bits:

- CPU C-states were enabled in the BIOS (C10)

- ASPM was enabled with everything set to L1

- RC6 (Render Standby) enabled

- Aggressive LPM Support enabled (ALPM)

- DISABLED: HD Audio, Connectivity Mode, LEDs, GNA Device, Serial Port

9-10 watts was the consumption when the display output was on.

7 watts was the consumption once the display turned off (consoleblank=600 kernel boot parameter for a 600s timer), which is where this system sits most of the week.

8 watts was the consumption if the USB keyboard power management was disabled. If you don’t SSH into the server from elsewhere, spending the extra watt for keyboard use might be necessary.
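You can check that `consoleblank=600` actually made it onto the running kernel's command line by reading `/proc/cmdline`. On Ubuntu the parameter is typically added to `GRUB_CMDLINE_LINUX_DEFAULT` in `/etc/default/grub`, followed by `update-grub`. Below is a minimal parser sketch. It doesn't handle quoted values containing spaces.

```python
from typing import Dict, Optional

def kernel_cmdline_params(cmdline: str) -> Dict[str, str]:
    """Split a kernel command line into {key: value}; bare flags like 'quiet' map to ''."""
    params: Dict[str, str] = {}
    for token in cmdline.split():
        key, _, value = token.partition("=")  # "root=UUID=x" -> ("root", "UUID=x")
        params[key] = value  # this sketch keeps the last occurrence of a key
    return params

def console_blank_seconds(cmdline: str) -> Optional[int]:
    """Return the consoleblank timeout in seconds, or None if it isn't on the command line."""
    value = kernel_cmdline_params(cmdline).get("consoleblank")
    return int(value) if value else None
```

With the setup described above, `console_blank_seconds(open("/proc/cmdline").read())` should return 600. It returns `None` if the parameter didn't take.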

Problematic Power Measurements – Loaded up with spinning rust (spun-down)

As mentioned in the beginning, I started with 12 hard drives. Half were 2.5″ and the other half were 3.5″. Because the motherboard only has 4 SATA ports, a SATA controller and a port multiplier were used to handle the remaining drives. Additionally, 4 NVMe drives were used early on. One of them, a Western Digital SN770, had a tendency to get quite hot even at idle, which indicates it probably wasn’t going into a low power mode.

With all the equipment connected, at idle, with display off, and with the 12 drives spun down to standby, I was shocked to see that my idle power consumption had gone from 7 watts all the way up to a whopping 24-25 watts. Far too much! Something was amiss.

Power Consumption Puzzles – High Power Investigation and Diagnosis

I disconnected the hard drives and started testing components one at a time. These were fairly crude tests meant to get a rough idea as to the culprit, so numbers here aren’t precise.

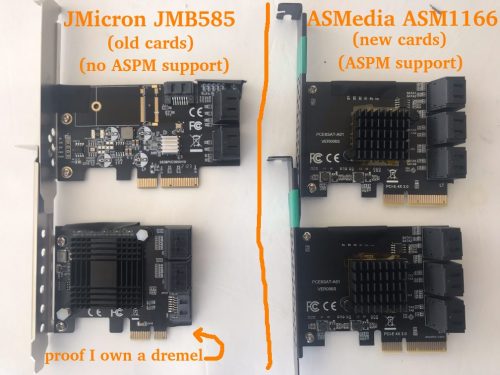

I quickly discovered that the JMB585 SATA controller I was using caused power consumption to increase by something in the 6-10 watt range (precise measurements in a later section). The controller itself is only supposed to take a couple watts, and the tiny heatsink stayed cool, so there was obviously more going on. Where was the power going?

I decided to watch the CPU package C-states. Without the JMB585 SATA controller, the system hit C6. When the JMB585 was reconnected, the best the system hit was C3. Ah ha! But why? Turns out that if a PCIE-connected device won’t go into ASPM L1, the CPU won’t go into as deep a sleep. The JMB585 controller cards don’t seem to have ASPM support.
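To find which device is holding the package back, you can use `lspci -vvv`. Run it as root, otherwise the capability details are hidden. Each device's `LnkCtl:` line reports whether ASPM is enabled on its link. The sketch below parses saved `lspci -vvv` output. It assumes lspci's usual text layout: device header lines start with the PCI address, and capability lines are indented.

```python
import re
from typing import Dict, Optional

# Device header lines start with the PCI address, e.g. "03:00.0 SATA controller: ..."
# (or "0000:03:00.0 ..." when lspci is run with -D).
HEADER = re.compile(r"^(?:[0-9a-f]{4}:)?[0-9a-f]{2}:[0-9a-f]{2}\.[0-7] ")
# e.g. "LnkCtl: ASPM L1 Enabled; RCB 64 bytes, ..." or "LnkCtl: ASPM Disabled; ..."
LNKCTL = re.compile(r"LnkCtl:\s+ASPM ([^;]+);")

def aspm_state_by_device(lspci_vvv: str) -> Dict[str, str]:
    """Map each PCI address to the ASPM state on its first LnkCtl line."""
    states: Dict[str, str] = {}
    current: Optional[str] = None
    for line in lspci_vvv.splitlines():
        if HEADER.match(line):
            current = line.split()[0]
            continue
        match = LNKCTL.search(line)
        if current is not None and match and current not in states:
            states[current] = match.group(1).strip()
    return states
```

Save the output with `sudo lspci -vvv > lspci.txt`, then look for entries whose state isn't `L1 Enabled`. A controller without ASPM support, like the JMB585 here, would be expected to report `Disabled`.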

A little further experimentation revealed something else that I hadn’t known, and it has to do with C6 vs C8. The system will only hit C8 if there’s nothing hooked up to the CPU-attached PCIE lanes. In other words, if anything is plugged in to the top PCIE slot or the top NVMe slot, C6 is the maximum. The power consumption difference between C6 and C8 *seemed* to be less than a watt in a simple test.

So while C8 would be a luxury, hitting C6 was a must. C3 uses too much power. If SATA controllers were going to prevent the CPU from hitting the best power saving states, I started to wonder whether I should have been looking for a motherboard with 6-8 SATA ports so that I wouldn’t have to rely on add-on controllers…

A little searching for SATA HBAs showed that while there aren’t many options here, the ASM1166 SATA controller should support ASPM L1, though the firmware has to be flashed for it to work properly (and to work at all on newer Intel boards). This was something I’d have to order: I have Marvell and JMicron spares, but they don’t support ASPM. I’d actually been avoiding ASMedia for years, but out of necessity they were now getting another chance: I ordered a couple of ASM1166 6-port SATA controllers.

Aside: BadTLP, Bad! AER Bus Errors from the pcieport

Worth a mention… During initial testing with a WD Black SN770 (Gen4 NVMe), I found a problem when the primary (top CPU-attached) PCIE and NVMe ports were used. Running dmesg resulted in output littered with stuff like:

pcieport 0000:00:06.0: AER: Corrected error received: 0000:02:00.0

nvme 0000:02:00.0: PCIe Bus Error: severity=Corrected, type=Physical Layer, (Receiver ID)

pcieport 0000:00:06.0: PCIe Bus Error: severity=Corrected, type=Data Link Layer, (Transmitter ID)

pcieport 0000:00:06.0: AER: Error of this Agent is reported first

nvme 0000:02:00.0: [ 6] BadTLP

…after much trial-and-error I found that if the “PEG – ASPM” BIOS setting was set to [Disabled] or [L0s] there were no errors.

Of course, this was a bad option, as [L1] is crucial for power savings. If [L1] or [L0sL1] were used, the only option was to set the Link Speed of those ports to [Gen3], which didn’t stop the errors, but reduced them substantially.
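When A/B testing settings like PEG ASPM or link speed, counting the AER messages over a fixed period gives you a number to compare rather than eyeballing dmesg. The sketch below tallies errors per reporting device and severity. It assumes the `PCIe Bus Error: severity=...` message format shown above.

```python
import re
from collections import Counter

# Matches e.g. "nvme 0000:02:00.0: PCIe Bus Error: severity=Corrected, type=Physical Layer, ..."
AER_LINE = re.compile(r"(\S+): PCIe Bus Error: severity=(\w+)")

def count_aer_errors(dmesg_text: str) -> Counter:
    """Count 'PCIe Bus Error' lines per (reporting device, severity)."""
    counts: Counter = Counter()
    for line in dmesg_text.splitlines():
        match = AER_LINE.search(line)
        if match:
            counts[(match.group(1), match.group(2))] += 1
    return counts
```

Capture `sudo dmesg > dmesg.txt` at roughly the same uptime for each configuration, then compare `count_aer_errors(open("dmesg.txt").read())` between them.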

Some research showed the root cause can be any number of things. Because swapping the motherboard or CPU wasn’t a pleasant thought, my best hope was swapping to a different brand of NVMe.

I ordered some Crucial P3 NVMe drives. This turned out to be a successful endeavor: with the WD drives replaced by the Crucial drives, I was no longer getting any errors, though keep in mind these are Gen3 drives.

Power Consumption Puzzles – Finding L1.1 and L1.2 to be enabled on chipset-connected ports only

When I had the 2 Crucial P3 NVMe drives installed in the CPU-connected PCIEx16 slot and the top M2 slot, I noticed higher idle temps than expected. While the NAND sat at about 27-29C, the controllers were reporting 49-50C – much higher than I expected for these particular drives.

I moved the one from the PCIEx16 slot to a chipset-connected PCIEx4 slot. An interesting difference between these drives showed up via lspci -vvv:

CPU-connected M2 slot: L1SubCtl1: PCI-PM_L1.2- PCI-PM_L1.1- ASPM_L1.2- ASPM_L1.1-

Chipset-connected PCIE slot: L1SubCtl1: PCI-PM_L1.2+ PCI-PM_L1.1+ ASPM_L1.2+ ASPM_L1.1+

L1 sub-states only seem to get enabled on the chipset-connected slots. Unfortunate, but it does seem to coincide with the available BIOS settings in the screenshot above.
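The `L1SubCtl1:` flags are easy to misread when skimming long `lspci -vvv` output. The small sketch below turns one of these lines into enabled/disabled flags, using the two lines above as examples.

```python
import re
from typing import Dict

# Each flag looks like "ASPM_L1.2+" (enabled) or "PCI-PM_L1.1-" (disabled).
FLAG = re.compile(r"((?:PCI-PM|ASPM)_L1\.[12])([+-])")

def l1_substates(l1subctl1_line: str) -> Dict[str, bool]:
    """Turn an lspci 'L1SubCtl1:' line into {flag name: enabled}."""
    return {name: sign == "+" for name, sign in FLAG.findall(l1subctl1_line)}

cpu_slot = l1_substates("L1SubCtl1: PCI-PM_L1.2- PCI-PM_L1.1- ASPM_L1.2- ASPM_L1.1-")
chipset_slot = l1_substates("L1SubCtl1: PCI-PM_L1.2+ PCI-PM_L1.1+ ASPM_L1.2+ ASPM_L1.1+")
print(any(cpu_slot.values()), all(chipset_slot.values()))  # False True
```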

Referring back to the motherboard picture at the start (GREEN slots are CPU-connected, ORANGE slots are chipset-connected):

I put both NVMe drives on chipset-connected PCIE slots. Now both showed L1.1+/L1.2+, and both controller temps dropped from the 49-50C range to 38-41C.

Unfortunately, when attempting various A/B tests using these 2 Crucial NVMe drives with different slot configurations and various BIOS settings, I saw very inconsistent temperature behavior, though it’s worth noting the JMB585 and an NVMe boot drive were also connected during these tests. For example, both drives might idle at around 40C until a soft reboot, at which point 1 (or both) might idle in the 50C range. Sometimes it seemed possible to keep 1 drive on the CPU-connected M.2 and retain 40C temperatures on both drives as long as the x16 slot wasn’t populated. Presumably I was hitting some sort of bug. The Samsung boot NVMe seemed to keep a consistent idle temperature regardless of what was happening with the Crucial NVMe drives, so I suspected the Crucial drives themselves were at least partly to blame.

Interestingly, sometimes one (or both) controller temps would drop all the way down to the 29C range when on the chipset-connected slots. Since trying to find a low-power 4TB NVMe replacement for the Crucial P3 wasn’t a realistic goal, my best hope at this point was that the ASPM-incompatible JMicron JMB585 was somehow to blame, since it was soon to be replaced with the ASPM-compatible ASMedia ASM1166.

Late Update: I unfortunately didn’t keep track of temperatures throughout the rest of the testing, and heatsinks/airflow between drives have all been jumbled around. But for whatever it’s worth, in the final build, my Crucial P3 controller temps are 31-34C, and NAND temps are 23-24C.

Power Consumption Puzzles – Swapping from the JMB585 to the ASM1166.

After a couple weeks the ASM1166 arrived. First a couple bits regarding the card which you might find helpful if you’re considering it…

I began with a firmware flash – ASM1166 cards often have old firmware which doesn’t work with Intel 600-series motherboards and from what I understand can have issues with power management. Newer firmware can be found floating around in various places, but I decided to grab a copy from SilverStone (“fix compatibility issue” in the Download section of https://www.silverstonetek.com/en/product/info/expansion-cards/ECS06/) and followed the instructions at https://docs.phil-barker.com/posts/upgrading-ASM1166-firmware-for-unraid/ . Note that the SilverStone files had an identical MD5 to firmware I found by following the thread at https://forums.unraid.net/topic/102010-recommended-controllers-for-unraid/page/8/#comment-1185707 .
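Comparing the two firmware downloads is a one-liner with `md5sum`. The same check as a Python sketch using hashlib is below. The file names in the usage note are hypothetical. MD5 is fine for confirming that two downloads are the same file, but it isn't a defense against a deliberately tampered image.

```python
import hashlib

def md5_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Hex MD5 digest of a file, read in chunks so large images needn't fit in memory."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def same_firmware(path_a: str, path_b: str) -> bool:
    """True if both files have identical MD5 digests."""
    return md5_of(path_a) == md5_of(path_b)
```

For example: `same_firmware("silverstone_fw.bin", "forum_fw.bin")`.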

For anyone planning to purchase one of these ASMedia cards, I should note that like most SATA controllers and HBAs out there, the quality really varies. One of my cards had a heatsink that was on a bit crooked: the thermal pad was thick enough to prevent it from shorting nearby components, but be aware that these products can be really hit-and-miss. This is one of the situations where paying a little more to buy from somewhere with a good return policy can be prudent.

I did quite a bit of A/B testing, so here is a quick “JMicron JMB585 vs ASMedia ASM1166” in terms of total system power consumption, though it may only be applicable to this platform (or perhaps even this specific motherboard).

DRIVELESS

First, power consumption without any drives connected to the cards (the SATA SSD boot drive is connected to the motherboard) to get a baseline. PowerTOP used on all devices except for the keyboard (adding +1 watt). Measurements after the display output went to sleep.

- 8 watts – No SATA controller – C8 power state

- 9 watts – ASM1166 on a chipset-connected x4 slot – C8 power state

- 12 watts – JMB585 on the CPU-connected x16 slot – C3 power state

- 15 watts – JMB585 on a chipset-connected x4 slot – C3 power state

- 22 watts – ASM1166 on the CPU-connected x16 slot – C2 power state

The ASM1166 does well here if plugged into a chipset-connected slot (only +1 watt), but does horribly if connected to the main PCI-E slot (+14 watts), where the CPU package power state plummets to C2. Shockingly, the JMB585 behaves in the opposite manner: its consumption is lower on the CPU-connected slot (and it didn’t cause C2). However, you’ll soon see that things change when drives are actually connected…
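The deltas quoted above are each reading minus the 8 watt no-controller baseline. They are spelled out below. Keep in mind that the Kill-A-Watt's 1 watt resolution means each delta is only good to roughly a watt.

```python
BASELINE_W = 8  # no SATA controller, C8

driveless_w = {
    "ASM1166 on chipset x4 (C8)": 9,
    "JMB585 on CPU x16 (C3)": 12,
    "JMB585 on chipset x4 (C3)": 15,
    "ASM1166 on CPU x16 (C2)": 22,
}

deltas = {setup: watts - BASELINE_W for setup, watts in driveless_w.items()}
for setup, delta in deltas.items():
    print(f"{setup}: +{delta} W")
```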

I did additional testing with the controllers, including playing “musical chairs” with a couple NVMe drives to see if multiple devices would throw a wrench into things, but nothing unexpected took place so I’ll skip those details.

ADDING DRIVES

With baseline measurements complete, next it was time to actually put some drives on these controllers. The SATA SSD boot drive stayed on the motherboard, 2 NVMe drives were added to the mix (chipset-connected unless otherwise noted), and 4 of the 2.5″ SATA hard drives were placed on the controller. I’ll list the “spun down” consumption after the hard drives went into standby – “spun up” was exactly 2 watts higher in every test while the drives were idle.

- 10 watts – ASM1166 on a chipset-connected x4 slot – C8 power state

- 11 watts – ASM1166 on a chipset-connected x4 slot with 1 NVMe moved to the CPU-connected x16 slot – C6 power state

- 11 watts – 2x ASM1166 on chipset-connected x4 slots, with only 1 NVMe drive – C8 power state

- 16 watts – JMB585 on a chipset-connected x4 slot – C3 power state

- 24 watts – JMB585 on CPU-connected x16 slot – C2 power state

With 4 drives connected via a chipset-connected slot, the ASM1166 adds +2 watts to system power consumption, whereas the JMB585 adds +8 watts. No contest.
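To put the 6 watt gap between the two controllers in perspective, here is a rough yearly figure. It assumes the server sits in this idle state around the clock, which it won't do exactly, so treat the result as an estimate.

```python
HOURS_PER_YEAR = 24 * 365

def kwh_per_year(watts: float) -> float:
    """Energy used by a constant load running all year."""
    return watts * HOURS_PER_YEAR / 1000

asm1166_extra_w = 10 - 8  # ASM1166 with 4 drives vs. no controller
jmb585_extra_w = 16 - 8   # JMB585 with 4 drives vs. no controller
gap_kwh = kwh_per_year(jmb585_extra_w - asm1166_extra_w)
print(f"{gap_kwh:.2f} kWh per year")  # 52.56 kWh per year
```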

An additional benefit is that I was able to use both of the ASM1166 cards in the system, whereas attempting to use both of my JMB585 cards at the same time resulted in the system refusing to boot, though that could be a platform or motherboard-specific issue.

There is a trade-off though – I always found the JMB585 to be rock-solid reliable, including when paired with a JMB575 port multiplier. My past experience with ASMedia SATA controllers has been less than stellar: reliability with the ASM1166 remains to be seen, but at the very least it’s a bad candidate for a port multiplier since it doesn’t support FBS (only CBS).

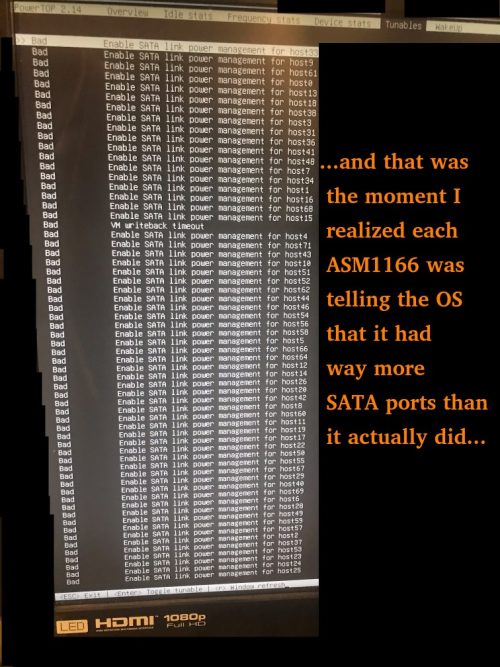

A couple other minor hiccups that presented with the ASM1166:

- When removing/reinserting the NVMe boot drive, a BIOS message appeared claiming that it couldn’t boot due to GPT corruption. The ASM1166 cards had to be temporarily removed for the BIOS to “find” the NVMe boot drive again (after which they could be reinstalled).

- The ASM1166 cards claim to have a *lot* of ports – this causes additional boot time as Linux has to iterate through all of them.

Update: SATA and SSD Brands

One of the comments mentioned an older Samsung 840 PRO SSD limiting to C3 whereas a Corsair Force GT SSD allowed C8. While those are older drives, I still found this a bit surprising. It was worth investigating.

I used the H770 as a testbed with a Samsung 850 EVO SATA SSD boot drive along with a Crucial P3 NVMe and built a custom kernel to allow the Realtek network adapter to reach L1.2. No ASM1166, just using the Intel onboard SATA. I reached C10 after running powertop with auto-tune and allowing the display to sleep. I tried various drives I have on hand, powering off the system each time to swap drives and repeat the process. Here were the results.

Drives that resulted in the system being stuck at C6:

- 1TB Patriot P210 SATA SSD

Drives that allowed C10:

- 500GB Samsung 850 EVO SATA SSD

- 4TB 2.5″ Seagate SATA HDD

- 8TB 3.5″ Seagate SATA HDD

- 14TB Toshiba SATA HDD

- 1TB Sandisk Ultra 3D SATA SSD

- 4TB Sandisk Ultra 3D SATA SSD (note: slow trim)

- 4TB Crucial MX500

I suggest being cautious when selecting SATA SSD brands and models. I’ll try to update this list over time with drives I’ve tested, but keep in mind certain manufacturers in the storage space have shown a propensity towards silently swapping to inferior components in some of their mainline products, so you should always verify the claimed performance metrics of any storage devices you buy while within your return window. Feel free to leave a comment with good/bad drives you come across.

Power Consumption Puzzles – Conclusion

A few important bits if aiming for low consumption:

1) Motherboard support and BIOS configuration are critical – I’ve had motherboards with very inflexible BIOSes. On this one, “Native ASPM” and the appropriate L1 states must be enabled (so that ASPM is OS-controlled instead of BIOS-controlled) for low power consumption to work.

2) Devices all need to support ASPM L1. Otherwise you’re really rolling the dice. The hardest part here, as you might have guessed, is finding SATA controllers that support it – if possible, get a motherboard with enough Intel chipset-connected SATA ports to avoid needing a separate card. I should note that finding NVMe drives that have working low-power APST power states under ASPM isn’t always a given, and you’ll want to do some research there too.

3) If you can hit the C8 power state, avoid using CPU-attached PCIe lanes (top PCIe and M2 slot). On this specific motherboard, my advice would be to avoid using them altogether if you can, unless you either need the low-latency full-bandwidth path to the CPU or your devices are so active they never sleep anyway. Recall that BOTH my JMicron and ASMedia SATA cards caused the CPU Package C-State to plummet to C2 if plugged into the x16 PCI-E slot.

4) Measuring power from the wall is the only way to make sure that what you *think* is happening is actually happening. A Kill-A-Watt device will pay for itself over time if you use it – consider that I bought mine in 2006 ($16USD + $14USD shipping at the time through eBay). At that time I found that our rarely-used fax machine, which was always powered on, used 7 watts… just keeping that one device powered off when unused over the next 10 years more than paid for the Kill-A-Watt.
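Here is a rough sketch of the fax machine arithmetic. It uses 7 watts around the clock for 10 years, against the $30 the meter cost, and treats the rarely-used fax as off nearly all the time. The electricity price is a hypothetical placeholder, so substitute your own rate.

```python
HOURS_PER_YEAR = 24 * 365

fax_w = 7
years = 10
fax_kwh = fax_w * HOURS_PER_YEAR * years / 1000  # energy the always-on fax would have used
meter_cost_usd = 16 + 14                          # meter plus shipping in 2006

PRICE_USD_PER_KWH = 0.10  # hypothetical flat electricity price
savings_usd = fax_kwh * PRICE_USD_PER_KWH
print(f"{fax_kwh:.1f} kWh saved, about ${savings_usd:.0f} vs ${meter_cost_usd} for the meter")
# 613.2 kWh saved, about $61 vs $30 for the meter
```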

Power Consumption when loaded up with a bunch of HDDs

Now that a variety of parts have moved in-and-out of the system throughout this process, the current setup is as follows:

- 1x Samsung 970 EVO Plus NVMe (500GB boot drive)

- 2x Crucial P3 NVMe (4TB each)

- 5x Seagate 2.5″ HDD (5TB each – 4TB utilized)

- 6x Seagate 3.5″ HDD (10TB each – 8TB utilized)

- 2x ASM1166 cards providing SATA ports

Total power measured from the wall (display on, keyboard enabled):

- 50 watts with all 11 HDD in active-idle

- 38 watts with the 6x 3.5″ HDD in Idle B

- 34 watts with the 6x 3.5″ HDD in Idle C

- 21 watts with the 6x 3.5″ HDD in Standby_Z (spun down)

- 18 watts with the 5x 2.5″ HDD ALSO in Standby

- 16 watts with the display output ALSO off

- 15 watts when PowerTOP is allowed to disable the USB Keyboard

Seagate rates standby consumption of these 3.5″ drives at about 0.8w each, and the 2.5″ drives at about 0.18w each. This lines up with what I’m seeing above. My active-idle numbers actually match up pretty well to Seagate specs too.
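You can check those ratings against the measurements above. The calculation below uses the per-drive standby figures, plus the 0.85w low power idle rating for the 2.5″ drives mentioned further down. Together they predict both the standby total and the drop seen when the 2.5″ drives spin down (21 watts to 18 watts). It assumes the 2.5″ drives were sitting in low power idle just before going into standby.

```python
STANDBY_W = {"3.5in": 0.8, "2.5in": 0.18}  # Seagate standby ratings, per drive
COUNT = {"3.5in": 6, "2.5in": 5}
LOW_POWER_IDLE_25IN_W = 0.85                # Seagate low power idle rating, 2.5in (assumed prior state)

standby_total = {kind: COUNT[kind] * STANDBY_W[kind] for kind in COUNT}
expected_drop = COUNT["2.5in"] * (LOW_POWER_IDLE_25IN_W - STANDBY_W["2.5in"])

for kind, watts in standby_total.items():
    print(f"{COUNT[kind]}x {kind} in standby: {watts:.2f} W")
print(f"expected drop when the 2.5in drives spin down: {expected_drop:.2f} W (measured: 3 W)")
```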

The obvious observation: compared to the rest of the system components, the 3.5″ drives are power-hungry monsters.

The HDDs will eventually be replaced with SSDs. With idle consumption as low as it is during HDD standby, there isn’t a major rush and this process will gradually take place as my HDD drives/spares fail and SSD prices fall.

The plan for “end game” is for an all-SSD build. Originally the plan was for 1 boot drive, 6xNVMe (likely Crucial P3 4TB) for a RAIDZ2 array, and 6xSATA (likely Samsung 870 QVO 8TB) for the 2nd RAIDZ2 array. Since using the CPU-connected M2/PCIe slots not only brings unpredictability but also comes at a slight C-state/power/temperature cost, I might alter that plan and give up a couple NVMe in the first array and use SATA instead so that I don’t have to touch CPU-connected lanes. Time will tell.
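For sizing those end-game arrays: RAIDZ2 gives up two drives' worth of capacity to parity, so usable space is roughly (drives - 2) × drive size. The sketch below ignores ZFS metadata, allocation padding, reserved slop space, and TB vs TiB differences, so real usable space will be somewhat lower.

```python
def raidz2_usable_tb(drives: int, drive_tb: float) -> float:
    """Rough RAIDZ2 usable capacity: two drives' worth of space goes to parity.

    Ignores ZFS metadata, allocation padding, slop space and TB vs TiB.
    """
    if drives <= 2:
        raise ValueError("need more drives than the two parity drives")
    return (drives - 2) * drive_tb

print(raidz2_usable_tb(6, 4))  # 6x 4TB NVMe (e.g. Crucial P3): 16
print(raidz2_usable_tb(6, 8))  # 6x 8TB SATA (e.g. Samsung 870 QVO): 32
```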

Unnecessary Storage Details

This part is only worth reading if you’re interested in meticulous details about the storage. Feel free to skip to the final section otherwise.

NVMe boot drive

As alluded to earlier, this is a Samsung 970 EVO Plus. Currently less than 4GB of the 500GB space is used (a 64GB swap partition exists but always sits at 0 used). It was originally chosen because Samsung had developed a reputation for reliability (which has been falling by the wayside lately), and Samsung also scored well in reviews every time it came to idle power consumption. This drive is almost always idle and both Controller and NAND temps stayed low throughout all testing (20-24C). It may eventually be swapped to a SATA SSD to free up an NVMe port.

2.5″ HDD

These drives are used for the primary 6-drive ZFS RAIDZ2 array – the one that gets the most use. One day a week it’s busy with a task that involves reading a few TB over the course of 24 hours. Usage through the rest of the week is sporadic, and the drives spend most of the week spun down. For anyone wondering why piddly 2.5″ drives are used instead of 3.5″ drives, there *is* a reason: power consumption.

Power consumption of the 2.5″ Seagate drives is honestly pretty impressive. Spun down they’re each rated at 0.18w, in low power idle they’re rated at 0.85w, and the read/write averages are rated at about 2w. There are plenty of SSDs out there with worse power consumption numbers than this spinning rust. 5TB capacity gives a lot of storage-per-watt.

The major downsides to these 2.5″ Seagate drives are:

- Not great performers. 80-120MB/s peak read/write. To be fair though, many TLC/QLC SSDs fall to these write levels when their SLC cache is exhausted.

- SMR (Shingled Magnetic Recording). Reads are fine, but write performance absolutely plummets when random writes take place – it acts like a QLC SSD without an SLC cache that also doesn’t have TRIM.

- Low rated workload (55TB/year vs 550TB/year for 3.5″ Exos drives).

- No configurable error recovery time (SCT ERC), and these drives can hang for minutes if they hit an error while they relentlessly try to re-read the problematic sector. Ubuntu needs to be configured to wait instead of trying to reset the drive after 30 seconds.

- Higher error rates if they heat up (I’ve had to replace a few and have discovered they don’t like running hot).

- Typical HDD pain points (slow to spin up, etc).
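On Linux the per-disk command timeout behind that 30 second reset lives in `/sys/block/<disk>/device/timeout`. Below is a minimal sketch for raising it on a list of disks. The disk names and the 180 second value in the usage note are assumptions for illustration, not the settings used in this build. The value resets at reboot, so a udev rule or boot-time script is the usual way to make it stick.

```python
from pathlib import Path
from typing import Iterable, List

def set_disk_timeouts(disks: Iterable[str], seconds: int, sysfs_block: str = "/sys/block") -> List[str]:
    """Write `seconds` to each disk's SCSI command timeout file; return the disks updated."""
    updated = []
    for disk in disks:
        timeout_file = Path(sysfs_block) / disk / "device" / "timeout"
        if not timeout_file.is_file():
            continue  # missing disk, or a device without a SCSI-layer timeout file
        timeout_file.write_text(f"{seconds}\n")
        updated.append(disk)
    return updated
```

For example, as root: `set_disk_timeouts(["sdb", "sdc"], 180)`.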

To be absolutely fair to Seagate, these are sold as external USB backup drives. Pulling these 15mm tall drives out of the enclosures and using them as RAID members in a NAS isn’t exactly using them as intended. The ultra low power consumption is tremendous, but there are obvious trade-offs.

Long term, these 2.5″ 4/5TB drives will slowly be replaced by 4TB SSD drives (possibly all NVMe). SSDs in 4TB capacity started to become available on the consumer end in 2021/2022 at about 4-5x the cost of the spinners. Less than 2 years later they’ve dropped to about 2x the cost, and I expect decent brands to last more than 2x as long as the Seagate spinners.

If availability of the Crucial P3 (Gen3) model remains, I’ll likely keep with this model despite being limited to Gen3 speeds. I strongly considered the Crucial P3 Plus (Gen4), but power consumption in reviews was higher despite very few situations where performance was notably higher as well. My biggest concern with the P3 Plus (Gen4) was that if I had issues with ASPM/APST, Tom’s Hardware showed it with a 0.3w idle power premium over the P3 (Gen3) for the 2TB model. I prefer “worst-case scenario” power to be as low as possible.

3.5″ HDD

Used in the secondary 6-drive RAIDZ2 array – a backup array that’s spun up for about 2 hours a week where it receives constant heavy writes.

Power consumption of the 3.5″ Seagate drives is about what you’d expect. These 10TB drives are rated at about 0.8w each in standby, 2-5w idle, and 6-9w reading and writing.

Two concerns here:

- These are rated to collectively pull about 45-50 watts when writing. That’s a bit of extra UPS load I don’t really want if a lengthy power outage takes place during the backups (I stick with consumer 1500 watt UPSes).

- These are rated to collectively pull about 4.8 watts when in standby. Again, some UPS load I wouldn’t mind shaving off.

Long-term these drives will likely be replaced by Samsung 870 QVO 8TB SATA drives. The 870 QVO sports 0.041w/0.046w idle with ALPM, 0.224w/0.229w idle without, and 2.0-2.7w during a copy (according to Tom’s Hardware/AnandTech).

Price-wise, the Samsung 8TB SATA SSD is currently a fair bit more expensive than 8TB spinners (closer to 3x the cost) so unless these drives start to see more frequent use for some reason, replacement with the SSDs will almost certainly wait until I’ve run out of spares.
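The snippet below turns the per-drive ratings above into array totals for six drives. It is a quick Python calculation using rated figures only, not measurements from this build. It takes the higher ALPM idle figure (0.046w) as the worst case.

```python
DRIVES = 6

# Per-drive ratings quoted in the text (watts).
HDD_STANDBY_W = 0.8
SSD_IDLE_ALPM_W = 0.046
SSD_IDLE_NO_ALPM_W = 0.229
SSD_COPY_W = (2.0, 2.7)


def array_watts(per_drive: float, drives: int = DRIVES) -> float:
    """Total draw for `drives` identical drives, rounded to milliwatts."""
    return round(per_drive * drives, 3)


hdd_standby = array_watts(HDD_STANDBY_W)              # 4.8 W
ssd_idle_alpm = array_watts(SSD_IDLE_ALPM_W)          # 0.276 W
ssd_idle_no_alpm = array_watts(SSD_IDLE_NO_ALPM_W)    # 1.374 W
ssd_copy = tuple(array_watts(w) for w in SSD_COPY_W)  # (12.0, 16.2) W
```

By these ratings, six SSDs would draw roughly a quarter to a third of the 45-50 watts the spinners are rated for during the weekly backup. Compared with HDD standby, they would also save about 4.5 watts while idle with ALPM.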

NVMe Cache Drive

Replacing my spinning rust with SSDs is a process that will likely take a while.

In the meantime, ZFS has a couple options to make use of high-speed storage (typically SSD) in front of slower storage:

- “Special” Allocation Class – allows you to create a vdev specifically for metadata and for “small” blocks if desired.

- A cache drive, known commonly as an L2ARC.

If you create the “special” vdev at pool creation, all your metadata (and optionally, small blocks of a size you choose) will go on the “special” vdev instead of your spinning rust. You get very fast file listings and directory traversal whilst keeping the spinning rust for the files themselves. Yes, you can “ls” a bunch of directories without waking your HDDs from sleep. The biggest downside is that because all your metadata is on this vdev, if it ever dies, access to all your data is essentially gone. So it really should be at least mirrored. Maybe even a 3-way mirror. Say goodbye to a few ports.
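The rule ZFS uses to decide what lands on the “special” vdev can be summarized in a few lines. This is a simplified Python model, not ZFS code:

- Metadata always targets the special class.
- File data blocks target it only if their size is at or below the dataset's `special_small_blocks` property (0 by default, meaning no file data goes there).
- Once the special vdev is full, allocations spill back to the regular vdevs.

The function and parameter names are illustrative.

```python
def allocation_class(is_metadata: bool, block_size: int,
                     special_small_blocks: int = 0,
                     special_full: bool = False) -> str:
    """Simplified model of where ZFS places a block when a special vdev exists.

    special_small_blocks mirrors the ZFS dataset property of the same name:
    0 (the default) keeps all file data off the special vdev.
    """
    wants_special = is_metadata or (
        special_small_blocks > 0 and block_size <= special_small_blocks
    )
    if wants_special and not special_full:
        return "special"
    # Everything else, plus any overflow from a full special vdev,
    # goes to the normal (spinning rust) vdevs.
    return "normal"
```

On a real pool the threshold is set per dataset, for example `zfs set special_small_blocks=32K tank/dataset`. The pool and dataset names there are placeholders.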

The L2ARC is a bit different. It’s essentially a level 2 cache. When the cache in RAM gets full, ZFS will copy some of the blocks to the L2ARC before it evicts that content from RAM. The next time that data needs to be accessed, it’ll be read from the L2ARC instead of the disk. One benefit compared to the “special” vdev is that you’re fine with only 1 SSD – if there’s a problem with the data in the L2ARC (bad checksum, drive dies, etc), ZFS will just read the content from the original disk. Also, once the L2ARC is full, ZFS will just start again at the beginning of the L2ARC SSD and overwrite stuff it wrote before which has some pros (old data never accessed anymore) and cons (data that was frequently accessed and will need to get written to the L2ARC again). You can also add/remove L2ARC devices from the pool at your leisure – want to add a 64GB SSD, 500GB SSD, and 2TB SSD? Go right ahead – ZFS will distribute blocks among them. Need to remove the 500GB SSD from the pool a few days later and use it elsewhere? Go right ahead. The biggest downside to the L2ARC is that if you forget to specify “cache” when adding the device, you probably mucked up your pool. It’s also imperfect: even with careful tuning it’s hard to get ZFS to write EVERYTHING you want to the L2ARC before it gets evicted from memory. At the same time, depending on your data, the L2ARC may see a lot of writes, and you may have to carefully watch the health of your SSD.
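The wrap-around behaviour described above can be modelled as a ring buffer. This toy Python sketch makes several simplifying assumptions:

- One slot holds one block.
- The feed rate limit, compression and persistence across reboots are ignored.

Once the device is full, the write pointer returns to the start and overwrites the oldest blocks. A read for a block that was never cached, or whose slot has since been overwritten, simply falls back to the pool disks. The class and method names are hypothetical.

```python
class L2ARCRing:
    """Toy model of an L2ARC device written as a ring buffer."""

    def __init__(self, slots: int):
        self.slots = [None] * slots  # block id stored in each slot
        self.index = {}              # block id -> slot number
        self.head = 0                # next slot to write

    def feed(self, block_id: str) -> None:
        """Copy a block that is about to be evicted from the ARC in RAM."""
        if block_id in self.index:
            return  # already cached, nothing to write
        old = self.slots[self.head]
        if old is not None:
            # Wrapping around: the oldest entry is overwritten and forgotten.
            del self.index[old]
        self.slots[self.head] = block_id
        self.index[block_id] = self.head
        self.head = (self.head + 1) % len(self.slots)

    def read(self, block_id: str) -> str:
        """Return where a read for block_id would be served from."""
        return "l2arc" if block_id in self.index else "pool disks"
```

On a real pool the device is added with `zpool add <pool> cache <device>` and removed with `zpool remove <pool> <device>`. Leaving out the `cache` keyword would try to add the disk as an ordinary data vdev, which is the mistake warned about above.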

In the past I’ve used the “special”, used L2ARC, and have used both at the same time (you can even tell the L2ARC not to cache things already contained in the “special” vdev).

This time around I simply went with an L2ARC on a 4TB NVMe: once all the other 2.5″ drives have been replaced by SSD and the speed benefits of an SSD cache no longer apply, I can simply remove this cache device (though theoretically having 1 L2ARC cache drive handling the bulk of reads *would* allow the other NVMe drives to stay in low power mode more…).

Conclusion – Regrets? Second-guessing? What could have gone differently?

Unlike the ASRock J4005 build where I realized part way through that I’d kneecapped myself in a number of ways, I don’t get the same sense here. This time I ended up with low idle power AND a pretty capable system that should be flexible even if repurposed in the future.

I’m quite happy with my component choices, though I’d be curious to know how the MSI PRO Z790-P DDR4 (one of the other motherboards I considered) would do in comparison. Functionality-wise the MSI has the advantage of 6xSATA ports, but it comes with the obvious downside of the notorious Intel 2.5G networking chip. The MSI also has a PS/2 port and I’ve never actually checked to see if PS/2 keyboard power consumption is lower than USB (recall that I save 1 watt if I allow powertop to shut down the USB keyboard port). And of course it would be interesting to compare the ASPM and ALPM settings, and to see if the snags I hit with CPU-attached PCIe/M.2 slots exist in the same way.

While this system currently sits in the 15-16 watt range when idle with drives in standby, once all HDDs are replaced with SSDs, I’d expect idle consumption of around 10-11 watts which isn’t bad for 72TB worth of drives, 64GB of RAM, and a pretty decent processor.

Update: Recent Linux kernels disable the L1 power saving modes of most Realtek NICs which prevents the CPU from entering decent C-states, thus increasing power consumption by quite a lot. While there are workarounds, moving forward I’ll likely limit myself to motherboards containing Intel 1 Gigabit network adapters (perhaps moving to Intel 2.5 Gigabit when it becomes clear they’ve worked out all the kinks). You can find further details about the Realtek NIC situation in the comments below. The script I mention deep in the comments can be found at: RTL8125-ASPM.sh.txt .

I guess a lot of headaches could have been avoided if you found a board with more sata ports on it!

On my end I never managed to get my chip beyond C3. I purposefully tried to reduce the amount of excess components (like those SATA controllers, I'd read about how hit and miss they could be).

I'll double check my BIOS settings to make sure that I've enabled all the relevant things you mentioned in your piece.

Very interesting article, many thanks.

So you don’t care about ECC? Some say it’s a must for an always-on server, especially with ZFS.

Also, NVMe drives seem to burn more fuel than SATA SSDs.

I am looking for a frugal ECC motherboard but haven't found anything yet; W680 boards are hard to get.

In the meantime I am running Unraid on a J5040 ASRock board with two 1TB SSDs in a mirror and 3 mechanical WDs that sleep most of the time.

The system burns 19 watts at idle; it was 16-17 watts (C6) before adding an ASMedia controller (4). I will replace the old Seasonic PSU with a Corsair soon.

Regards

Geert

Keep in mind that if the 2nd M.2 slot is populated, only 5 of the 6 SATA ports will work. If I recall correctly the BIOS on the H470M DS3H also hid a few options (like forcing IGPU or dedicated GPU) unless put in CSM mode. Additionally it would randomly hang on the boot screen with an error if I had an LSI SAS HBA installed, necessitating another restart attempt - regular SATA controllers worked fine though. Putting aside those weird little nuances, I found it to be reliable and it worked great and I quite like the board.

Do you plan to publish a similar article for a system with ECC support and low idle power consumption that would still be compatible with Linux? (As an example, I think AMD's low idle power consumption is not the best on Linux.)

I am planning to make such a build soon myself, and I would like to know whether I should start in the next month or two or wait a bit to read your publications, which would provide useful insights to help me make better-educated decisions on the choice of components.

Nevertheless, thank you very much for the thoroughly written article. You did an impressive job here highlighting the important parts of building a very efficient low power NAS.

In any case, best of luck with your build!

Thanks for your very detailed and informative NAS articles!

I'm putting mine together with an i3-10500T, 16GB and 4xHDD.

For the power supply, I found an Antec EarthWatts 380W EA-380D (80+ Bronze) reconditioned for 25€. Is it worth it in your opinion, or is it too old?

If you have another model to recommend, I'd love to hear from you. Thanks in advance.

I also got a 970 Evo Plus (2TB) as the boot drive and can confirm that it must be connected to the chipset in order to reach low package C-states (C8). What I found interesting is that the difference between package C3 and C8 was much bigger when the SSD is connected to the chipset. I believe that's because the chipset itself will only go into deep sleep states when all attached devices support ASPM and SATA Link Power Management is active.

Connecting the SSD to the CPU's PCIe lanes only increased power consumption by ~2W (package C3 vs C8), while not having ASPM on a device connected to the chipset seems to cost an additional 5W just for the chipset, with the same effect (C3) on the package C-state.

One interesting thing worth noting is that I have a PCIe 1.1 DVB-C capture card connected to the chipset. Even though lspci lists ASPM as a capability for the card and I booted the kernel with pcie_aspm=force, it didn't get enabled for that card. I had to force-enable ASPM via setpci, see https://wireless.wiki.kernel.org/en/users/documentation/aspm - it seems to work without issues. That helped me reach 15W idle power. Unfortunately the capture card still takes ~5W; otherwise I currently only have 2x 4TB Toshiba HDDs connected, which spin down when idle.
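Finding devices like that capture card, which advertise ASPM in `LnkCap` but have it switched off in `LnkCtl`, can be automated by scanning `lspci -vv` output. Run lspci as root so the link registers are visible. This is a rough Python sketch:

- The parsing assumes the usual lspci text layout and only checks L1.
- The function names are made up.
- The sample in the test is abbreviated, illustrative output, not a capture from this system.

```python
import re
import subprocess

# A device header line starts in column 0, e.g. "04:00.0 Multimedia controller: ..."
HEADER = re.compile(r"^(\S+)\s")
LNKCAP = re.compile(r"LnkCap:.*?ASPM ([^,]+),")
LNKCTL = re.compile(r"LnkCtl:\s*ASPM ([^;]+);")


def l1_capable_but_disabled(lspci_text: str) -> list[str]:
    """Return PCI addresses that advertise ASPM L1 but have ASPM disabled."""
    result, current, cap = [], None, ""
    for line in lspci_text.splitlines():
        header = HEADER.match(line)
        if header:
            current, cap = header.group(1), ""
            continue
        if m := LNKCAP.search(line):
            cap = m.group(1)  # e.g. "L0s L1" or "not supported"
        elif (m := LNKCTL.search(line)) and current:
            if "L1" in cap.split() and m.group(1).strip() == "Disabled":
                result.append(current)
    return result


def scan() -> list[str]:
    """Run lspci on this machine (root is needed to see link registers)."""
    out = subprocess.run(["lspci", "-vv"], capture_output=True, text=True, check=True)
    return l1_capable_but_disabled(out.stdout)
```

A listed device is only a candidate. Forcing ASPM on hardware that advertises it but handles it badly can cause instability, so test each one as described above.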

By the way, SATA Hot Plug must be disabled for all ports, otherwise the package will only reach C6.

1) it looks like that it is better to use a CPU with an embedded GPU unless the motherboard supports booting without GPU. I was planning to reduce power usage just by removing the discrete GPU card (after system installation) but this motherboard hangs at boot if there is no GPU connected! By googling around I found that this condition is quite typical for consumer motherboards. Looking at xblax's numbers, he can reach better idle power levels (ok, the motherboard is not exactly the same...).

2) I can reach C10 state for all the cores (right-most columns in powertop) but the package C-state reached at idle depends on which PCIe slot is used for the discrete GPU. By using the first PCIe slot served by the CPU lanes I cannot go beyond package C2 state for 99% of the time: in this configuration the average mains power is stably below 26W. If I move the GPU to one of the other slots served by the B760 chipset lanes, the package C-state reaches about 7% (C2) and 91% (C3), and the average power drops below 22W, but it is less stable.

Is there any chance to improve the result or is it better to buy the 12100 CPU (with GPU)?

sh -c "echo 1 > /sys/bus/pci/devices/0000:01:00.0/link/l1_aspm"

(you'll need to replace 0000:01:00.0 with whatever corresponds to your Realtek card, from lspci -vv)
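The same sysfs write can be applied to every Realtek Ethernet adapter at once. This minimal Python sketch matches PCI vendor ID 0x10ec (Realtek) and class 0x0200 (Ethernet controller). It assumes two things:

- Your kernel exposes the `link/l1_aspm` attribute. It is absent on older kernels and on devices where ASPM control isn't offered, in which case the device is skipped.
- You accept the stability risk that the kernel change was guarding against.

It is not the RTL8125-ASPM.sh script mentioned in the article. The function name and the `sysfs_root` parameter are illustrative, and it must run as root against the real /sys.

```python
import glob
import os

REALTEK_VENDOR = "0x10ec"
ETHERNET_CLASS_PREFIX = "0x0200"  # PCI class 02 (network), subclass 00 (Ethernet)


def _read(path: str) -> str:
    with open(path) as f:
        return f.read().strip()


def enable_realtek_l1(sysfs_root: str = "/sys") -> list[str]:
    """Write 1 to link/l1_aspm for every Realtek Ethernet device found."""
    enabled = []
    pattern = os.path.join(sysfs_root, "bus", "pci", "devices", "*")
    for dev in sorted(glob.glob(pattern)):
        try:
            if _read(os.path.join(dev, "vendor")) != REALTEK_VENDOR:
                continue
            if not _read(os.path.join(dev, "class")).startswith(ETHERNET_CLASS_PREFIX):
                continue
        except OSError:
            continue
        knob = os.path.join(dev, "link", "l1_aspm")
        if not os.path.exists(knob):
            # Older kernel, or ASPM control not exposed for this device.
            continue
        with open(knob, "w") as f:
            f.write("1\n")
        enabled.append(os.path.basename(dev))
    return enabled
```

Like the one-liner above, this does not persist across reboots. Afterwards, check the package C-state in powertop to confirm it actually helped.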

If you try it, can you please let us know on what C State and Watt usage you go to? :)

Also, did you swap to an iGPU and remove the old GPU?

I also saw that someone with an MSI PRO B760M-A WIFI DDR4 (which seems to be the same as the ASUS one) has the same issue, as does someone with an ASRock B760M Pro RS/D4.

I was able to reach around 13-14W with that board by using ASPM L1 on the 2.5Gbit port, all the BIOS settings and "powertop --auto-tune"

No USB / screen attached, only CPU (12600) and 1 Samsung 980 Pro Nvme in a chipset slot, proxmox running in idle.

However I am using a Be quiet! Dark Power Pro 11 currently, which I guess isn't very power efficient at those lower loads. I am thinking about looking for a used Corsair RM750(/x)/RM650(/x).

You can look up a good test on tweakpc.de

Super great article, thanks for all these informations.

I’m planning to build my NAS. As power consumption is the main topic, what do you think about the following build (I’m kinda a noob about the system and what’s possible, and about the limitations of such a low TDP chip)?

ASRock N100M micro ATX, with the new Intel® Quad-Core Processor N100 (up to 3.4 GHz) and a 6W TDP. As there are only 2 SATA ports, the idea is to add a SAS HBA card with 8 additional SATA ports in the 1 x PCIe 3.0 x16 slot. For storage it would be 1 M.2 (the one on the motherboard) for the TrueNAS OS, 2 mirrored SATA SSDs for VMs, Docker, … and, as a final step, 8 Seagate Exos 7200 rpm HDDs (2 at the beginning, then growing as needed).

For the power supply, a Seasonic Focus PX 550W - Modular 80+ Platinum ATX, and finally a single stick of 32GB of RAM (non-ECC).

Many thanks in advance

On the SAS HBA card, I'd suggest looking around to see what idle power consumption others are seeing on the specific card you're considering: the popular ones often pull a few watts while doing absolutely nothing. Not sure how *BSD handles the cards, but of the few that seem to have ASPM enabled by default, Linux eventually seems to disable it in the kernel at some point due to issues. That said, this is a situation where the ASRock N100 might fare better than a separate CPU/motherboard combo as I'd expect it to be less sensitive to c-state implications of an expansion card, though this is just a guess based on what I saw with my ASRock J4x05 boards and may not apply to N100.

The Seasonic PX 550W looks like a great choice.

Overall looks like a solid build!

Do you have any tips for identifying motherboards that can achieve low power usage? People sometimes recommend ITX motherboards but I haven't found any measurements about how many watts ITX vs ATX usually saves. Now, ITX wouldn't have worked for this build, but ATX doesn't seem to have been a significant source of power consumption anyway. In general, it seems very hard to figure out which motherboards are power-efficient and which are not?

What do you mean with "the E-core silicon overhead" and why did you try to avoid it? I understand the CPUs with E-cores are probably more complex, but I would've thought that the E-cores could lead to lower power usage when the CPU is doing non-intensive tasks at low load.

Again, thanks for the great info. I hope to be able to build a system with similar power efficiency. Right now I have a Gigabyte Z790 UD AX motherboard and an i5-13500 system that I can not get below 28W.

The advantage to ITX is that it tends to limit the component count, but it's not strictly necessary - last week I actually repurposed the "Intel i3-10320 on a Gigabyte H470M DS3H" I mentioned at the beginning and got it down to 6 watts idle (headless, no keyboard, onboard Intel i219V 1GbE network only, c-states in BIOS, 3 Samsung SATA SSDs 840/860/870, Corsair RM850 power supply, Ubuntu Server with powertop). It's a very utilitarian motherboard. I won't do a separate write-up because the board is no longer available, but 6 watts on that MicroATX Gigabyte H470 board and 7 watts on the ATX ASUS H770 board in this write-up are my best 2 results so far, and notably neither was ITX. Something else I just noticed: both these boards only have 6 power phases.

As to the "E-core silicon overhead", a lot of details can be found at https://www.hwcooling.net/en/the-same-and-yet-different-intel-core-i5-12400-duel-h0-vs-c0/ , but I'll try to summarize. The i5-12400 comes with 6 P-cores and 0 E-cores enabled, commonly referred to as 6+0. However, it came in 2 variants: a "C0" stepping, which was originally an 8+8 that had cores fused off to become a 6+0, and an "H0" stepping, which was manufactured directly as a 6+0 and never had any E-core hardware inside to begin with. In the tests (page 5 of that article), the C0 used up to 16 watts more power than the H0 depending on the benchmark, including almost 11 watts more at idle. Now it's always possible their C0 sample had other contributing issues causing power leakage, or that there's some other variable at play, but either way the 2 chips that had physical E-core hardware inside didn't fare well in the idle test.

Because I focus on extremely low idle consumption for most of my systems, I can't justify buying any of the combined P/E-core chips until I see some data that shows chips with E-cores doing under 10 watts idle. And I simply haven't yet. This is an area where Intel is very much under threat these days: the AMD Mini PCs are now getting down to about 6-7 watts idle power consumption for a Ryzen 9 7940HS ( https://youtu.be/l3Vaz7S3HmQ?t=610 ) and if AMD brings this type of APU design to the desktop side or someone like ASRock starts to package some of these impressive HS chips in a custom motherboard, Intel could quickly lose the low-idle-power market.

I can see that the motherboard I bought is probably not ideal (it advertises a lot of phases).

With that said, even if your board had a lot of inefficient MOSFETs, the 28 watt power consumption you said you're getting seems a bit high unless you've got some spinning rust or a PCIe card that guzzles power. Have you checked to see if you're hitting C6 or better power states? Recall that when I put the ASM1166 on the main PCIe slot I was limited to C2 and was consuming 22 watts.

You are indeed correct that the 28 W I shared was not as good as it could get. I made the mistake of thinking that unplugging the SATA cables from my HDDs would leave them powered off. As is obvious in hindsight, you also need to unplug them from the PSU. Additionally, I had a bunch of peripherals connected to the PC that I didn't realize would consume power (in particular a plugged-in monitor makes a big difference). After disconnecting all HDDs and all peripherals I get readings in the 8-10W range.

To hopefully make this a useful data point for others I'll share some more details. The CPU is an i5-13500 in a Gigabyte Z790 UD AX motherboard. The only thing connected is a SATA SSD and a single stick of memory. The PSU is a 850W Corsair RM850x. The system reaches C8 and even C10. A few more things could be done to reduce the power draw. I was measuring while idling in GNOME (I assume having no DE running will save a tiny bit of CPU), I have two CPU fans that are running slowly even at low temps, the system is on WiFi (I assume ethernet consumes less power), and I haven't disabled case LEDs nor HD Audio.

I'm now very happy with the level of power consumption. Perhaps, one takeaway is that Intel E-cores do not affect the idle power draw much, at least in this CPU. The only problem I have now is that the system is unstable and sporadically reboots 😭. I think I've narrowed the issue down to a faulty CPU or a faulty motherboard (I've tried replacing the PSU and memtest86+ says that the memory is fine). The company where I purchased the parts claims that both are fine, but unless I find another solution I'll try and replace the CPU and motherboard with some low-end parts: a 13th gen i3 and an Asus B760M-A motherboard. If that fixes the problem hopefully I'll be able to return the other parts, in the worst case I'll use the budget parts for a server and the higher-end parts for a workstation.

I have exactly the same setup (i5-13500 + Z790 UD AX); my boot drive is a Samsung 990 Pro 4TB,

and I have exactly the same problem: sporadic reboots. Have you managed to find what is causing it?

I've tried the latest BIOS F9b as well as F5 and changed multiple BIOS settings, but so far nothing helps. My suspicion is that the boot drive goes into some low power mode and is unable to recover from it, but I don't know how to prove it.

There are no events before the crash. I also have netconsole configured, and still nothing is logged.

With the latest BIOS, default settings and a 970 Evo Plus as the boot device (no other disk attached), the system seems stable, but unfortunately it draws 32W on average while idling, which is not acceptable.

Currently I'm changing one setting at a time and waiting 12h+ in order to figure out what is really causing this, and that takes a lot of time.

I've tried all settings: once I enable C8/C10 the system becomes unstable and shuts down at some point. I've checked all drives and cables, replaced the PSU twice, and am currently awaiting a CPU RMA from Intel. Judging from this thread it might be a Gigabyte issue. I've also tried various BIOS firmwares without any luck, so if a CPU RMA does not fix it, it would seem to be an issue with Gigabyte Z790 motherboards.

Disappointing for sure, as I am out of the return window and really do not want to deal with a Gigabyte RMA, so if the CPU RMA doesn't work, I will just have to live with it or sell it off and buy a different board.

Since this thread is a little old (Dec 2023), I wonder if anyone else has had this issue and was able to resolve it.

Thank you.

I'm currently testing an ASUS TUF GAMING B760M-PLUS WIFI D4 (12+1 DrMos) and at idle, with the monitor and USB (mouse, keyboard) suspended, the power meter shows 6.7-8.1 W. The rest of the system:

- i5 13500

- 2 x 16 GB 3600 MHz (gear 1)

- 1 TB KC 3000

- RM550x 2021

- 2 x 120 mm fans @ 450 rpm

- audio codec on

- WiFi off

Arch Linux + RTL8125 module (my router does not support EEE)

With the Realtek card disabled, the power meter shows 6.4 - 6.7 W

Package C-state (PC) residency with and without LAN:

| PC state | w/ LAN | w/o LAN |
|---|---|---|
| C2 (pc2) | 0.7% | 0.6% |
| C3 (pc3) | 1.3% | 0.9% |
| C6 (pc6) | 41.1% | 0.0% |
| C7 (pc7) | 0.0% | 0.0% |
| C8 (pc8) | 0.0% | 0.0% |
| C9 (pc9) | 0.0% | 0.0% |
| C10 (pc10) | 55.8% | 97.8% |

I had similar results on a B660 AORUS MASTER DDR4 (16+1+1).

I recently bought an i3-13100 (4+0) and an i5-13500 (6+8) to test out the "E-core overhead" claims I've seen online. I'm happy to report that the power consumption at idle is identical for both of these chips! Perhaps the elevated power consumption issue is unique to the i5-12400 C0; unfortunately I don't have one on hand to test.

4W power loss

I'm currently running an off-lease Quanta 1U that I grabbed off eBay just before COVID hit, and its single-core performance is really showing its age. Also it idles at 80W >.<

I would like to share my experience with my new 12-14 W PC.

I just built a fanless mini-ITX PC. The case, an AKASA Maxwell Pro, is also a passive cooler, and inside is an AMD Ryzen 5600G (Zen 3, 65W TDP), a Gigabyte B550I AORUS PRO AX (BIOS FB), 1x 16GB DDR4 (I plan to upgrade to 2x32GB) and 1x 2TB Samsung 980 Pro M.2 SSD. It's powered by a 12V AC/DC power supply from AKASA (max. 150W) and an Inter-Tech MINI-ITX PSU 160 W.

12-14W idle power consumption for the whole PC under Windows 10 (measured on the DC side; the power plan is Balanced, but I enabled ASPM, P-states and C-states in the BIOS and PCIe power saving in the advanced settings of the Windows power plan).

Under load (Cinebench R23) 61-65W. Currently I'm undervolting to get better power consumption and temperatures.

----------

my small home-lab & NAS has under 2W idle power consumption ‼️

I recommend the Odroid H3 (H3+) with BIOS 1.11 and Debian 11 + DietPi + kernel 6.0.0 (or newer) + tweaks applied via powertop: it has an idle power consumption of only 1.2-1.5W (compared to 2.7W for a RPi 4) ⚡️ (with my configuration: 1x 16GB RAM and 1x SATA SSD).

See: https://i.ibb.co/7QD390m/H3-1-10-6-0-0-sata-idle.gif

Max memory size is 64 GB RAM and it has 1x m.2 port, 2x SATA 3, and 2x LAN 2.5Gbps. It's much faster than Raspberry Pi 4 with lower power consumption in idle. In load it can consume 20W (+depends on connected devices).

If you need more SATA ports then m.2 port can be expanded to 5x SATA using this: https://wiki.odroid.com/odroid-h3/application_note/m.2_to_sata_adapter

I'm currently struggling to get my new NAS to use less than 40W at idle with no data drives, and I cannot for the life of me understand why it's using that much. My gaming desktop idles at less.

It's an ASRock H670M-ITX/ac with an i3-12100, 8GB RAM and a be quiet! 400W PSU. I originally used a Kingston NV2 NVMe for the OS, but found that replacing it with a SATA SSD decreased idle power by about 10W (50W -> 40W).

According to powertop, cores get into C7 no problem, but package refuses to leave C2. Not sure how important that is.

I'll keep working at it, with your article as reference. :)

Matt,

Your articles inspired me, so I purchased the same motherboard (Prime H770-Plus D4) and a similar processor etc. I was able to reach a 12-14W minimum. I'm happy with that for now, but the additional 8W due to the package not reaching C8 anymore has been very frustrating. Have you seen anything similar in your build?

Several investigations have shown that it is a driver problem with the Realtek LAN. If you download and install the drivers for the 8125 chipset from the Realtek site, you can reach C10 as before.

You should consider a different PSU like the Corsair RM550x (2021) or be quiet! 12M 550W. The Corsair is the best for low power setups, but extremely hard to get. It will reduce the power consumption even further (by 2 to 4 watts).

This and other tweaks are mentioned in this topic:

https://forums.unraid.net/topic/98070-reduce-power-consumption-with-powertop/

Sadly, this was an intentional change to the Realtek driver contained in the kernel. It ensures that L1 is disabled on virtually every Realtek adapter, seemingly in response to a number of Realtek adapters experiencing stability issues when these low power states are enabled. On my current test system, this results in a 4 watt power increase at idle with the screen slept as the system no longer goes beyond C3.

Trying the Realtek driver that was linked by Tseting is likely to be the easiest workaround for now, though I have no idea how it will fare on unsupported kernel versions and I'm personally not a huge fan of kernel modules. I'll paste it here for convenience: https://www.realtek.com/en/component/zoo/category/network-interface-controllers-10-100-1000m-gigabit-ethernet-pci-express-software

There is of course a harder workaround - for those familiar with compiling the kernel, reverting the change in the diff above will restore L1/L1.1 on RTL8168h/8111h, RTL8107e, RTL8168ep/8111ep, RTL8168fp/RTL8117, and RTL8125A/B devices (anything older already had it disabled) - if you want to allow L1.2 you can force rtl_aspm_is_safe() to return true, though on my test system it didn't provide any benefit over L1.1.

Unless the kernel devs have a change of heart here, it looks like Intel NICs may be the only viable option moving forward. Intel 1G NICs have generally been very solid. Worryingly, I found that disabling the Realtek NIC on my MSI board doesn't completely detach it (still stuck at C3) so buying a board with a Realtek NIC with plans to disable it and use an Intel network expansion card may be risky. Worth noting that moving forward there is a flag vendors can set on an 8125A/B adapter to indicate L1.2 is tested and allowed which the linux kernel will respect, but I don't know if it's made it into any motherboards or expansion cards.

Thanks for the detailed information you shared.

Your setup has inspired me and I have purchased the "Prime H770-Plus" including 4800 DDR RAM.

Unfortunately I also have problems with NVMe SSDs when ASPM (and PCI Express clock gating) is enabled in the BIOS. The workarounds to block certain power saving modes of the WD drives did not help. I tried the SN750 (1TB) and the SN850X (2TB).

Can you still recommend the Crucial P3, or does it also regularly fail due to ASPM problems?

Does anyone have other NVMe drives running reliably on this board with ASPM enabled?

Do you run the setup productively in continuous operation?

Additionally, I have found that an 840 Pro (256GB), at least on SATA1, prevents the system from going lower than C3. A Corsair Force GT (128GB), on the other hand, works up to C8.

I got around the problem with the Realtek NIC by removing the check in the kernel.

Thank you and best regards

I have now tried a Samsung 990 pro. Unfortunately this still leads to the already known nvme aspm errors.

Does anyone else have any ideas?

It can't really be the power supply (I would find that very strange), because a Corsair cx 750 is being used here temporarily.

If nothing helps, does anyone have a good alternative board?

Somehow I put my foot in my mouth too often when it comes to hardware ;-(.

By itself it is a REALLY efficient NVMe drive for just a few bucks. But what makes it excellent for our use is that it lets any CPU run down to C10 with a few BIOS settings.

"I guess a lot of headaches could have been avoided if you found a board with more sata ports on it!"

That's not necessarily true, because it comes down to which SATA controller is used and/or how these ports are connected. Chances are high that it's using an onboard controller, and specifically for the N5105 there's a widely known NAS variant with 6 SATA ports floating around, sold by Kingnovy and Topton.

The black one uses a JMB585 and the green PCB one uses an ASM1166: black is stuck at C3 and green can go down to C8 (verified myself because I have the green variant). If I needed anything more than a backup server, I'd go the route taken here, with a much more powerful Intel on LGA1700.

A good example of how low you could go with idle power consumption is the Odroid H3: <2W idle with 2x HDDs via SATA in spindown. However, as soon as you add (the wrong) components, consumption climbs quickly. Check the power consumption stats here:

https://github.com/fenio/ugly-nas

TL;DR: In the end it's sipping more power than your build here. I should add that I previously owned an Odroid H2, which fried its 5V rail and took the (expensive) SSDs with it. Ever since then I've stayed away from chasing the absolute lowest power consumption with exotic combinations like the Odroid H3.

I'd say in the end it all comes down to - how practical everything is vs the power consumption levels.

That said I'm impressed with this build here - 7W idle with a 750W PSU is quite something.

I am currently upgrading my home server from a J3455 to an i5-11400.

While switching hardware I found a strange issue:

I5-11400+2x8Gb 2666 ddr4 +ASUS Prime B560M-A (latest bios) +asm1166.

If I boot from USB without any SATA drives attached, the package C-state reaches C8.

If I boot from USB with a SATA drive attached to the onboard SATA controller, the package C-state only reaches C2 (+4W).

If I boot from USB with a SATA drive attached to a PCIe ASM1166, the package C-state reaches C8.

So to me it seems the B560 SATA controller has problems with power saving. Even with L1 enabled for everything and powertop tuning applied, it will not go below C2.

Do you have an idea what could cause the B560 SATA controller to draw 4W more?

In the end it could be cheaper longtime to add another ASM1166 instead of the onboard controller. :D

This is enabled, I also tried with disabled, but it did not change power consumption or C-States. (leaving it enabled for now)

In the BIOS I am able to specifiy if M.2_2 is using SATA or PCIE. According to BIOS and Manual, SATA6G_2 is only blocked if M.2_2 is set to SATA.

But I have connected the ASM1166 in M.2_2 and configured it as PCIE. I confirmed that all onboard SATA ports work as expected with this setting.

Hotplug is disabled for all Ports by default. I enabled it to see if it changes something, but it did not. Leaving it on Disabled for now.

I booted from USB and tried different devices: 2x SSDs (Emtec and older Samsung), 2x 3.5" HDDs (WD and Seagate) and even 1x LG DVD Burner.

It seems that it doesn't matter what kind of device is attached.

It is always the same: as soon as I connect a device to the onboard SATA, C2 is the maximum.

To verify this I booted from USB Stick with SATA devices attached, and then unplugged all of them while booted.

As soon as the last sata device is physically disconnected from the live system it will go to pc6 immediately and pc8 short after.

When reconnecting all SATA devices it stays in PC6/8 but also dmesg does not recognize the replug (most likely because of hotplug disabled)

I will crawl through dmesg boot logs, maybe something interesting pops up.

- The motherboard's M.2 slot works (tested with an M.2 SSD)

- The SATA controller works (tested with an M.2-to-PCIe adapter in the motherboard's x16 slot, and in the M.2 slot of another mini PC)

But with the ASM1166 connected to the M.2 slot, nothing shows up in the BIOS or in lspci.

So it looks like the ASRock board has some problem.

I don't know how to proceed from here.

https://youtu.be/-DSTOUOhlc0?t=254

Also, one thing I don't understand in general: if I use the home server as a NAS but also as my router, would that prevent it from reaching deep C-states at idle, given that it would always have to do some work? I have a gigabit connection and I am currently using OpenWRT on a Pi 4, but with QoS enabled I only get half the download speed.

Thanks again.

As for being a router, I'm not sure, although it's something I hope to test out within the next couple of weeks. Currently I've got the ole ASRock/Intel J4005B-ITX running OpenWRT here as a router, but the motherboard limit on it is C6 (though it spends 88-92% of the time there after powertop with typical home traffic, including a YouTube stream I tested just now). The thing's powered by one of my old Antec PSUs, and it reliably sits at a constant 10 watts.

EDIT/UPDATE Dec 13: Just to follow up, I did a bit of testing with the ASUS H770 + i3-12100 + Intel i350-T4 running OpenWRT in a systemd-nspawn container, running privileged with SYSTEMD_SECCOMP=0. The results are a bit messy.

Doing nothing, it would be in C10 90-95% of the time.

TEST A: Handling just one device watching YouTube, it stayed in C10 90% of the time. With very light household traffic (spiky, averaging under 3Mbit/s) it sat in C10 80-90% of the time. While downloading from one of my web servers, which caps the rate at 4MB/s (roughly 32Mbit/s), C10 residency dropped to around 50-60%.

TEST B: Running iperf tests from an external VPS to a local machine (forwarding a port):

- 0Mbps = approx 82% C10 (household traffic only)

- 1Mbps = approx 73% C10

- 5Mbps = approx 61% C10

- 10Mbps = approx 58% C10

- 20Mbps = approx 30% C10

- 30Mbps = approx 12% C10

...it hit 0 at just over 40Mbps.

TEST C: Interestingly, passing through the router between 2 local networks (no NAT, just forwarding) offered different results:

- 0Mbps = approx 82% C10 (household traffic only)

- 1Mbps = approx 82% C10

- 5Mbps = approx 80% C10

- 10Mbps = approx 74% C10

- 20Mbps = approx 70% C10

- 30Mbps = approx 64% C10

...it hit 0 at just over 70Mbps.

Since I'm in an nspawn container, I wasn't able to try software flow offloading in the firewall section of OpenWRT to see if it would soften the impact in tests A and B - it's quite possible that the "flow offloading" it does would bring the results closer to test C. It's also possible that IPv6 might do better in tests A and B by skipping NAT, though the impact of smaller-but-more packets could always throw a wrench into things.

So far as I can tell, the takeaway here is that there's some degree of traffic the computer can handle as a firewall while still finding opportunities to sleep. But if it's being kept awake... other options start to look enticing too. I just tested the Intel J4005 again (plugged into nothing but a monitor) and it sits at 9-10W in OpenWRT even with C-states disabled, and I suspect the J5xxx series would be similar (no idea about the N100). If some oomph is needed, my Ryzen 5600G does 22-23W on an Ubuntu LiveDVD with C-states disabled. In my view, both of those start to look equally attractive any time Alder Lake loses its C-state advantage.

EDIT/UPDATE Dec 15: On bare metal, I configured Debian as a router. TEST B was nearly identical, except time in C10 was +6% for each item; it still hit the hard wall just over 40Mbps. Flow tables didn't help.

Test C fared a bit better, with the final numbers being:

- 70Mbps = approx 70% C10

- 75Mbps = approx 60% C10

- 80Mbps = 0% C10 (hit a hard wall here)

When I enabled flow tables for Test C, I eked out a little bit more:

- 80Mbps = approx 60% C10

- 85Mbps = approx 45% C10

- 90Mbps = 0% C10 (hit a hard wall here)

TEST D: To test the impact of an increasing number of connections, I fired up a torrent client and added a bunch of torrents with a capped global download speed of 1MB/s.

- 0 connections = approx 80% C10 (household traffic only)

- 16 connections = varied 36-39% C10

- 32 connections = varied 33-35% C10

- 64 connections = varied 26-29% C10

- 128 connections = varied 21-29% C10

- 256 connections = approx 20% C10

- 512 connections = approx 15% C10

- 1024 connections = approx 5% C10

...I tried flow tables at various points. No positive difference.

I came across a few interesting discoveries along this journey.

First, flow tables didn't help much at all. If anything, online speed tests seemed to peak a bit lower. Maybe it's something specific to the Intel i350-T4 I used (in a Debian bare metal H770 and an OpenWRT bare metal J4005).

Second, OpenWRT in a container wasn't a pleasant experience. I had weird issues cropping up where some connections were solid and others struggled. Perhaps with enough tweaking and coaxing it could be made to work smoothly. I found that a VM ate 2-2.5% CPU full-time on a bare install and wasn't easy on the C-states, so I didn't chase that one further.

Third, and this is very obscure and likely specific to the ASUS H770, my combination of hardware or even the Linux kernel I ran: if the built-in Realtek NIC was enabled in the BIOS but was NOT activated (via a systemd-networkd .network file), having another network card installed and activated caused the system to spend 50% of the time in C3. By "activated", I mean even something as simple as a .network file with a [Match] section containing Name=X and nothing else. I tried an i210, i340 and i350. When using the i350-T4, I noticed that a corresponding SMBus item in powertop also disappeared after I'd disabled the onboard NIC and moved the card to the second PCIe x1 slot. Sometimes it seems like ASUS has some gremlins running around on the PCIe bus.

I followed your build and bought the same motherboard with an i3-13100 CPU.

I have one issue that I can't resolve and I don't know where / what to look for.

I have installed Ubuntu 22.04 and also tried 23.04, but the issue is the same:

Whenever I try to ping www.google.com, I have an issue AS SOON AS I remove my keyboard and mouse:

- either "ping sendmsg no buffer space available" with the r8168 driver

- or "pcie link is down" with the r8125 driver

I have removed every power management option I could find.

I also tried plugging in another USB device.

Any clues?

- Check output of "dmesg" for any associated events.

- See if it happens when booting via the 22.04/23.04 LiveCD or if it's only post-install.

- Try Ubuntu 23.10 (I *think* the release version shipped with the kernel that disabled ASPM on the RTL8125) - either LiveCD or install depending on results of #1.

- Try a different set of USB ports, do 1 at a time to narrow down whether it's keyboard or mouse (or both), try a different brand keyboard/mouse to see if it makes a difference.

- Unplug/replug the network cable to see whether the network comes back up or if it's down for good.

- Disable "F1 for error" in the BIOS and try booting to the OS without keyboard/mouse plugged in, then see what happens when it's plugged in and unplugged.

- If you have a PCIe network card around (ideally non-Realtek for this test), see if it suffers from the same issue.
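Since the first suggestion is to check dmesg, here's a small Python sketch for filtering a saved dmesg log down to the NIC- and USB-related lines around the unplug. The keyword list is an assumption based on the drivers and symptoms mentioned above (r8125/r8168, "link is down", USB disconnects); exact driver wording varies between kernel versions, so adjust it as needed. `find_events` and the script name `scan_dmesg.py` are hypothetical.

```python
import re
import sys

# Assumed keywords based on the symptoms described in this thread;
# log wording differs between drivers and kernel versions.
DEFAULT_PATTERNS = ("r8125", "r8168", "r8169", "link is down", "aspm", "usb disconnect")


def find_events(lines, patterns=DEFAULT_PATTERNS):
    """Return (line_number, text) for each line matching any pattern, case-insensitively."""
    regex = re.compile("|".join(re.escape(p) for p in patterns), re.IGNORECASE)
    return [
        (n, line.rstrip("\n"))
        for n, line in enumerate(lines, start=1)
        if regex.search(line)
    ]


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: sudo dmesg > dmesg.txt && python3 scan_dmesg.py dmesg.txt
    with open(sys.argv[1], encoding="utf-8", errors="replace") as fh:
        for n, text in find_events(fh):
            print(f"{n:6d}: {text}")
```

Capturing the log right after unplugging the keyboard and mouse keeps the interesting lines near the end of the output.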

Perhaps someone else who has hit the same issue will reply. This isn't a situation I'd have encountered, as I ended up leaving my keyboard plugged in (eventually swapping to a wireless keyboard, which didn't mind being put to sleep by powertop). It could always be a weird issue with defective hardware.

CPU: i5-12600K

PSU: Corsair RM750e

NVMe: Samsung 980 Pro

RAM: 2x16 GB DDR5

OS: Ubuntu 23.04 Server (running off USB)

I initially bought an ASRock B760M Pro RS. After tuning the BIOS, and even after forcibly enabling ASPM on the Realtek card, I couldn't get lower than PC3. My total draw was about 15 watts. Not terrible, but this machine was for a new home server that would run 24x7, and I knew it could be better. Their BIOS does not allow explicitly setting package C-state limits (it just has a tri-state of Auto, Enabled and Disabled), so I emailed ASRock to ask whether they plan on adding support, and heard nothing back. So I was done with them.

I returned the ASRock and switched to an ASUS Prime B760M-A. I configured the BIOS and ran powertop. ASPM L1 was working on the Realtek without any changes on my part. I was at about 11 watts. After unplugging the DP cable and the USB wireless keyboard/mouse, it dropped down to 7.2 watts. Awesome! It was able to get down to PC10 and the system seems very stable. Pretty incredible how far desktop computers have come in power usage.

I just did a build with an ASUS Prime B760M D4 and had exactly the same findings on my motherboard. I have contacted ASUS to see if they have any feedback regarding the CPU-connected PCIe/M.2 slots. I get to C6 with an Intel I226-V in the x16 slot and a Samsung 970 EVO in the CPU M.2 slot.

However, I have to run my X710-DA2 network card in the x4 slot, since in the x16 slot the package C-state only reaches C2/C3.

I have no issues with my I226-V NIC.

My use case was to build a low-power 10 gigabit router. Currently running OPNsense, but may switch to VyOS for lower system load.

I tried:

1. Intel X520-DA2 (board from China), which does not support ASPM

2. Mellanox ConnectX-4 (board from China), which supports ASPM but only allows C3

3. a random I226 NIC from China, which supports ASPM, but I can't enable it

So, can you let me know the exact model or where you bought your I226-V NIC, please?

I used this one. https://www.aliexpress.com/item/1005005929535264.html

I'm sure you've heard this before but thank you for your informative posts and time.

I'm 100% new to all of this and it's a bit overwhelming, to be honest, but the thought of owning a server is incredibly appealing. I approached it exactly as I did when I started out PC building: watch a bunch of YouTube videos, read a bunch of articles and sort of muddle my way through. Researching server builds, it's immediately apparent that you actually need to know what you're doing, and reading your articles makes this even starker.

What are your suggestions for a Plex/Jellyfin server: always on, low power, full transcoding capabilities? Folks seem either high or low on products like Synology and QNAP, which gives me pause. Thanks once again.

Thanks for the great write-up! This year I built myself a low-power NAS to replace my pre-built QNAP TS-351 (Intel J1800) and a Gigabyte Brix J4105. My requirements were that this new system needed to consume less power on average than both of those systems combined and have far superior performance. With the help of your (previous) articles, the Dutch Tweakers forum, the German Hardwareluxx forum, the unRAID forum and some other sources, I came up with the following:

- Intel i5-13500

- Gigabyte B760M GAMING X DDR4

- Crucial Pro CP2K16G4DFRA32A

- be quiet! Pure Power 12 M 550W

- 1x Toshiba MD04ACA50D 5TB (from my NAS)

- 2x Toshiba MG07ACA14TE 14TB (from my NAS)

- Crucial P1 1TB (NVMe)

- Samsung 980 1TB (NVMe)

- Crucial BX500 1TB (backup connected through USB)

- Transcend SSD230S 2TB (SATA SSD)

- Philips OEM USB2.0 drive (boot drive)

With this setup I currently run almost 50 Docker containers with various applications and databases, and I can reach 17W from the wall at idle, with everything spun down and no services accessed except SSH, which I'm pretty pleased with. Package C8 can be reached, especially when most applications aren't doing much or when I stop them. When I stop everything, I can reach 11W at the lowest on unRAID.

Another thing I (and several others) have noticed on Intel 600/700-series platforms is that using USB 2.0 serial devices such as Zigbee or Z-Wave adapters increases power consumption by a lot, something like 5-7W. I currently use ser2net on a Pi to work around this. I reached out to Gigabyte and Intel, but both denied that this was an issue.

I also run most apps on the E-cores, as this saved me 1-2W on average. The same goes for system processes, which I tend to move to those cores with taskset. That seems to work out pretty well.
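For anyone wanting to script the E-core pinning instead of running `taskset` by hand, here's a minimal Python sketch. It assumes a hybrid Intel CPU on a kernel that lists the E-cores in `/sys/devices/cpu_atom/cpus` (a CPU list such as `16-23`); if that file isn't present on your system, find the E-core numbers another way (e.g. `lscpu --extended`) and pass them in directly. `os.sched_setaffinity` is Linux-only and does the same job as `taskset -p -c`; the helper names are hypothetical.

```python
import os
from pathlib import Path


def parse_cpu_list(text: str) -> set:
    """Parse a kernel CPU list such as "0-3,8,10-11" into a set of CPU ids."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            lo, hi = part.split("-")
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return cpus


def e_core_ids(path: str = "/sys/devices/cpu_atom/cpus") -> set:
    """E-core ids (assumed sysfs location), or an empty set if the file is absent."""
    try:
        return parse_cpu_list(Path(path).read_text())
    except OSError:
        return set()


def pin_to_e_cores(pid: int, cores=None) -> bool:
    """Restrict `pid` to the given cores (default: detected E-cores); False if none known."""
    if cores is None:
        cores = e_core_ids()
    if not cores:
        return False
    os.sched_setaffinity(pid, cores)  # same effect as: taskset -p -c <list> <pid>
    return True
```

For example, `pin_to_e_cores(1234)` moves PID 1234 onto the detected E-cores; it needs root (or ownership of the process), just like taskset.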

Regarding the Realtek NIC, I recently tried the 'native' Realtek driver that's available in the Apps store, but that disabled L1 completely for me, resulting in an additional 4W. Reverting to the kernel driver and forcing L1 with: `echo 1 > /sys/bus/pci/devices/0000\:04\:00.0/link/l1_aspm` works.

If you have any questions, you can always reach out. Have a great New Year <3!
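The same sysfs write can be done from Python, which avoids having to escape the colons in the PCI address. A minimal sketch, assuming the `l1_aspm` attribute exists for your NIC and the script runs as root; `0000:04:00.0` is the address from this comment (find yours with `lspci -D`), and `set_l1_aspm` is a hypothetical helper name. Like the echo, the setting does not survive a reboot.

```python
from pathlib import Path


def set_l1_aspm(pci_addr: str, enabled: bool = True,
                root: str = "/sys/bus/pci/devices") -> str:
    """Write 1 (or 0) to <root>/<pci_addr>/link/l1_aspm and return the value read back.

    Same as: echo 1 > /sys/bus/pci/devices/<pci_addr>/link/l1_aspm (needs root).
    """
    attr = Path(root) / pci_addr / "link" / "l1_aspm"
    attr.write_text("1" if enabled else "0")
    return attr.read_text().strip()  # confirm the kernel accepted the change
```

For example, `set_l1_aspm("0000:04:00.0")` should return `"1"` if L1 was enabled on that link.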